Slow IID test progress

Indicators of a successful slow IID test:

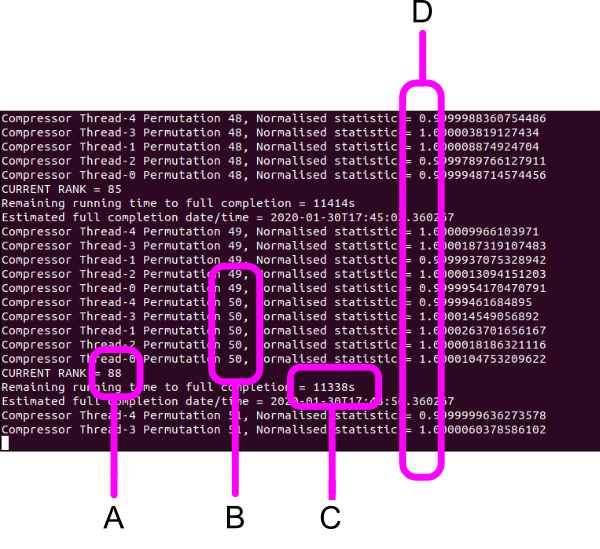

A. Current rank of the normalised test statistic (the number of permutations with a compression ratio < 1.0). This should quickly tend towards 100. For reference, 10 MB of /dev/urandom output passes the test in 10 to 20 minutes, depending on the luck of the draw.

B. Current permutation number. Total number of permutations = permutation number × number of utilised CPU cores, where the number of utilised cores is the total number of physical cores minus 1.
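
As a quick illustration of the arithmetic in B, here is a minimal Python sketch. The function name and the example figures are hypothetical; only the formula itself comes from the text above.

```python
def total_permutations(permutation_number: int, physical_cores: int) -> int:
    """Total permutations = permutation number x utilised cores."""
    if physical_cores < 2:
        # Assumption: the formula needs at least one utilised core.
        raise ValueError("expected at least 2 physical cores")
    utilised_cores = physical_cores - 1  # one physical core is left free
    return permutation_number * utilised_cores


# Hypothetical example: an 8-core machine displaying permutation number 1000.
print(total_permutations(1000, 8))  # 7000
```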

C. Number of seconds remaining until the test completes all 10,000 permutations. If the rank is not quickly tending towards 100, you may as well abort the test and amend the $(\epsilon, \tau)$ sampling methodology: increase the sampling resolution ($\downarrow \epsilon$), drop sample bits ($\downarrow N_{\epsilon}$) or increase the sample interval ($\uparrow \tau$). Otherwise, the samples will be non-IID and an entropy estimate by strong compression will be required. This will result in much lower bounds of certainty for $H$.

D. The normalised test statistic (NTS). For good IID samples, NTS will ideally flip between > 1 and < 1 while staying very close to 1.0; that is, its leading digit will oscillate randomly between 1 and 0. Such oscillation allows the current rank (A) to trend quickly towards 100. For data samples that do not exhibit IID characteristics, NTS will mainly remain > 1, as they will not compress as well when randomly permuted. In that case, rather than waiting for full test completion (C), abort the test and amend the sampling methodology. If you get something akin to `Normalised statistic = 2.3935196265528544`, find a better entropy source.
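
The relationship between the rank (A) and the NTS (D) can be sketched in Python. This is an illustration under stated assumptions, not the test's actual implementation: it assumes the NTS is the compressed size of a randomly permuted copy divided by the compressed size of the original sample, uses `zlib` as a stand-in compressor, and counts the rank as the number of permutations with NTS < 1.0. The function names are hypothetical.

```python
import random
import zlib


def compressed_size(data: bytes) -> int:
    """Size in bytes after compression (zlib is an assumed stand-in)."""
    return len(zlib.compress(data, 9))


def permutation_test(sample: bytes, permutations: int, seed: int = 0):
    """Return (rank, list of NTS values) for a number of random permutations.

    Assumed definitions: NTS = permuted size / original size, and
    rank = number of permutations whose NTS is below 1.0.
    """
    rng = random.Random(seed)
    baseline = compressed_size(sample)
    rank = 0
    statistics = []
    for _ in range(permutations):
        shuffled = bytearray(sample)
        rng.shuffle(shuffled)  # random permutation of the sample bytes
        nts = compressed_size(bytes(shuffled)) / baseline
        statistics.append(nts)
        if nts < 1.0:
            rank += 1
    return rank, statistics


# Deliberately non-IID sample: a repeating 0..255 ramp compresses very well,
# but its shuffled copies do not, so the NTS stays above 1 and the rank at 0.
structured = bytes(range(256)) * 40
rank, stats = permutation_test(structured, permutations=20)
print(rank, min(stats))
```

A sample like this, whose NTS never drops below 1, is the case where the text above suggests aborting early rather than waiting for all permutations.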